Nash Equilibrium Calculator

Nash equilibrium calculations are done with a WASM version of the awesome lrslib library. If multiple equilibria are found, we only report the last one.

Introduction

When playing Starcraft 2 there is an inherent tradeoff between economy and protection from early-game aggression. In the zerg vs protoss matchup, for example, the zerg can usually guarantee a win if they go for an aggressive "12 pool" build and the protoss opponent doesn't scout.

Therefore, protoss players will usually scout by sending an early worker across the map to see if the zerg player built an early spawning pool. However, this scouting worker hurts the protoss player's economy, since it would have mined a significant amount of minerals if left at home.

If a protoss player never scouts, a zerg player can gain an advantage by occasionally going for a 12 pool, and vice versa: if a zerg player never 12 pools, then a protoss player can gain an advantage by never scouting. So the question is: how often should the zerg player 12 pool, and how often should the protoss player scout, to protect themselves against exploitation by the opponent? It's not immediately obvious that an answer is guaranteed to exist, but a theorem proved by John Nash in game theory says there must be one: every game with a finite number of players and strategies has at least one equilibrium, as long as players are allowed to randomize between their strategies. This "optimal" strategy is called a Nash equilibrium.

If you randomly choose your strategy according to the Nash equilibrium, your opponent cannot gain an advantage by changing their build order; the best they can do is match the equilibrium win rate. You can think of playing at equilibrium as "locking in" a given win rate: if your opponent changes their build order, this win rate may stay the same or increase, but it will never decrease. Another way to look at the equilibrium strategy is to notice that if your opponent is not playing at equilibrium, then you can exploit that to increase your win rate.

Simple Example - 12 pool vs worker scout

To get familiar with the concept of a Nash equilibrium, let's explicitly calculate it for the example given at the beginning of this post: a zerg player can choose to 12 pool or macro, and a protoss player can choose to scout or not. To calculate the Nash equilibrium strategy, let's start by assuming how often zerg wins in each of the following cases:

- P(zerg win | 12 pool, no worker scout) = 100%

- P(zerg win | 12 pool, worker scout) = 20%

- P(zerg win | macro game, no worker scout) = 40%

- P(zerg win | macro game, worker scout) = 60%

P(zerg win | 12 pool, no worker scout) should be read as the probability that the zerg wins given that they 12 pool and the protoss does not worker scout.

Let x represent the fraction of games in which the zerg goes 12 pool and y represent the fraction of games in which the protoss worker scouts. If we are the zerg player, we want to know the probability that we win given x and y, or:

P(zerg win | x, y)

We can expand this since we have already enumerated all the possibilities:

P(zerg win | x, y) = xy*0.2 + x*(1-y)*1 + (1-x)*(1-y)*0.4 + (1-x)*y*0.6

P(zerg win | x, y) = -xy + 0.6*x + 0.2*y + 0.4
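As a quick sanity check of the algebra, here is a minimal Python sketch that stores the four assumed win probabilities in a table, evaluates the expanded sum directly, and compares it with the simplified polynomial on a grid of strategies. The names `ZERG_WIN`, `zerg_win_prob` and `simplified` are our own, chosen for illustration.

```python
# Assumed zerg win probabilities from the list above, keyed by
# (zerg build, protoss choice).
ZERG_WIN = {
    ("12 pool", "no scout"): 1.0,
    ("12 pool", "scout"): 0.2,
    ("macro", "no scout"): 0.4,
    ("macro", "scout"): 0.6,
}

def zerg_win_prob(x: float, y: float) -> float:
    """P(zerg win | x, y), where x = P(12 pool) and y = P(worker scout)."""
    zerg_mix = {"12 pool": x, "macro": 1 - x}
    protoss_mix = {"scout": y, "no scout": 1 - y}
    # Weight each matchup's win rate by how likely that pairing of builds is.
    return sum(
        zerg_mix[z] * protoss_mix[p] * win for (z, p), win in ZERG_WIN.items()
    )

def simplified(x: float, y: float) -> float:
    # The simplified polynomial from the text.
    return -x * y + 0.6 * x + 0.2 * y + 0.4

# The two forms should agree everywhere; check an 11 x 11 grid.
grid = [i / 10 for i in range(11)]
assert all(abs(zerg_win_prob(x, y) - simplified(x, y)) < 1e-12 for x in grid for y in grid)
print(round(zerg_win_prob(0.2, 0.6), 4))  # 0.52
```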

Now, the Nash equilibrium should be a point in this two-dimensional space where neither player gains an advantage by changing their strategy. In this case the strategy for the zerg player is how often they 12 pool (x), and the strategy for the protoss player is how often they worker scout (y). So the Nash equilibrium should be a saddle point where both the x and y derivatives are zero (i.e. neither player can change the win rate by adjusting their own strategy). The derivatives can be calculated as:

d/dx(P(zerg win|x,y)) = -y + 0.6

d/dy(P(zerg win|x,y)) = -x + 0.2

If we set these both to zero we find x = 0.2 and y = 0.6, or the zerg should 12 pool 20% of the time and the protoss player should scout 60% of the time.
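Setting the two derivatives to zero works for any two-by-two zero-sum game whose equilibrium has both players mixing. The sketch below uses a hypothetical helper, `solve_2x2` (not part of the calculator, which uses lrslib), to solve that case in closed form from a payoff table. It refuses to answer when the result falls outside (0, 1), which signals that at least one player should play a pure strategy and the derivative trick doesn't apply.

```python
def solve_2x2(a: list[list[float]]) -> tuple[float, float, float]:
    """Fully mixed equilibrium of a 2x2 zero-sum game (hypothetical helper).

    a[i][j] is the zerg win probability when zerg plays row i and protoss
    plays column j. Returns (x, y, value) with x = P(row 0), y = P(column 0).
    """
    (a00, a01), (a10, a11) = a
    d = a00 - a01 - a10 + a11
    if d == 0:
        raise ValueError("no unique fully mixed equilibrium")
    # d/dy = 0 pins down the zerg mix x; d/dx = 0 pins down the protoss mix y.
    x = (a11 - a10) / d
    y = (a11 - a01) / d
    if not (0 < x < 1 and 0 < y < 1):
        raise ValueError("equilibrium is not fully mixed")
    value = (x * y * a00 + x * (1 - y) * a01
             + (1 - x) * y * a10 + (1 - x) * (1 - y) * a11)
    return x, y, value

# Rows: [12 pool, macro]; columns: [worker scout, no scout].
x, y, v = solve_2x2([[0.2, 1.0], [0.6, 0.4]])
print(round(x, 3), round(y, 3), round(v, 3))  # 0.2 0.6 0.52
```

The row and column order matches the post's convention: x is the 12 pool frequency and y is the scouting frequency.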

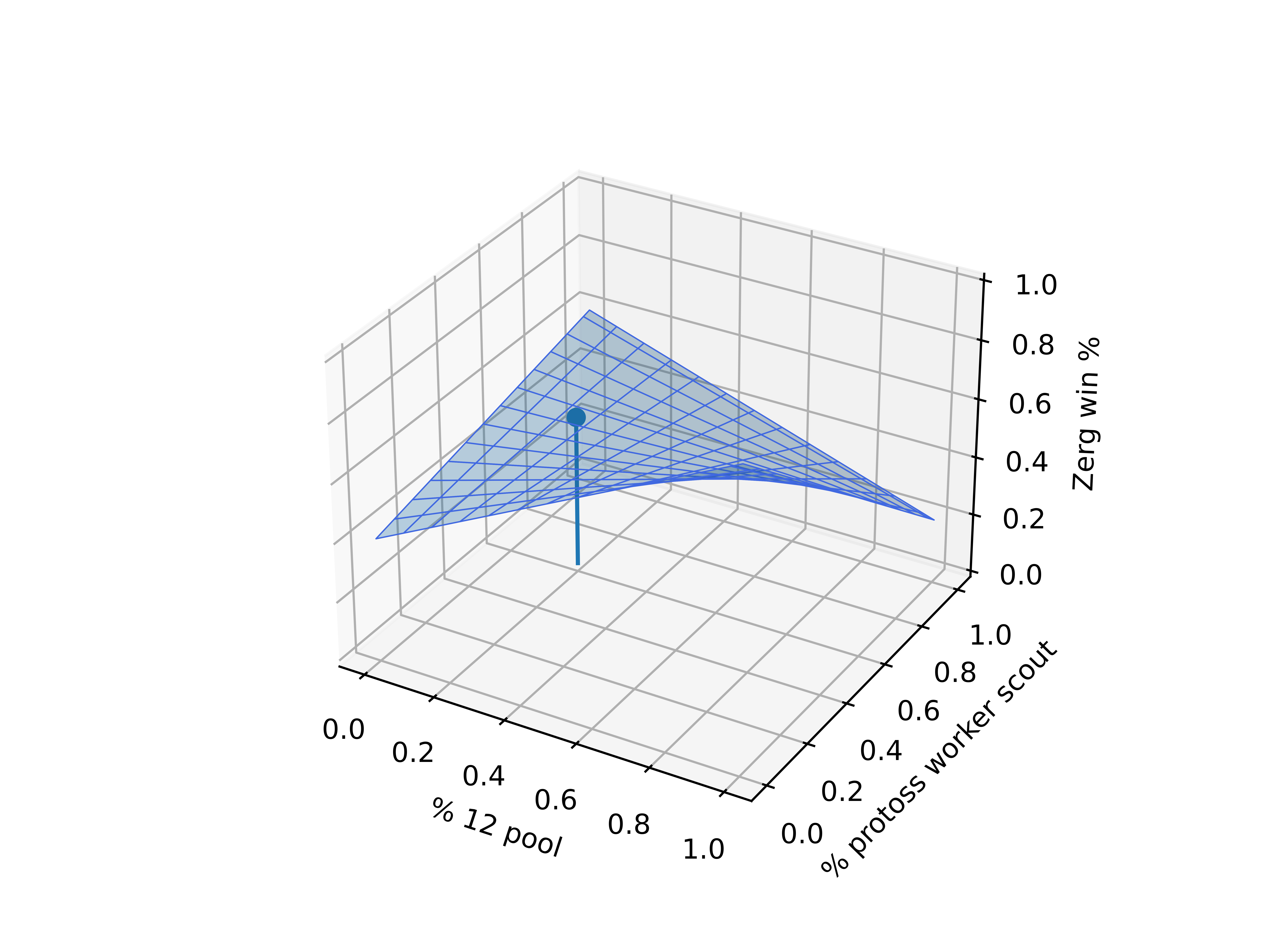

We can see this graphically by looking at the zerg win rate as a function of x and y:

It's a little hard to see, but the point at x = 0.2 and y = 0.6 is indeed a saddle point.
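The saddle point can also be found without calculus. The sketch below, assuming the simplified win rate derived above, searches a grid of 1,001 values of x for the one whose worst case (over the protoss's replies) is best, and does the mirror-image search over y for the protoss. Because the win rate is linear in each variable, checking the pure replies 0 and 1 is enough to find the worst case. Both searches should land on the same win rate, which is what makes the point a saddle.

```python
def zerg_win_prob(x: float, y: float) -> float:
    # Simplified zerg win rate derived above.
    return -x * y + 0.6 * x + 0.2 * y + 0.4

def worst_case(x: float) -> float:
    # Worst zerg win rate over protoss replies; linear in y, so pure replies suffice.
    return min(zerg_win_prob(x, 0.0), zerg_win_prob(x, 1.0))

def best_case(y: float) -> float:
    # Best zerg win rate over zerg replies; linear in x, so pure replies suffice.
    return max(zerg_win_prob(0.0, y), zerg_win_prob(1.0, y))

grid = [i / 1000 for i in range(1001)]
best_x = max(grid, key=worst_case)  # zerg maximizes its guaranteed win rate
best_y = min(grid, key=best_case)   # protoss minimizes zerg's best win rate
print(best_x, round(worst_case(best_x), 3))  # 0.2 0.52
print(best_y, round(best_case(best_y), 3))   # 0.6 0.52
```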

Equilibrium vs Exploitation

We previously calculated that the Nash equilibrium for the zerg player is to 12 pool 20% of the time. If the zerg player does this, their strategy is unexploitable; however, this does not mean that it is the best strategy. For example, if you are the zerg player going up against a protoss player who always scouts, then you know that y = 1 and your win percentage is

P(zerg win | x, y = 1) = -0.4*x + 0.6

and to maximize this you should set x = 0, i.e. never 12 pool (and you'll win 60% of the time as opposed to 52% of the time in equilibrium).
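The exploitative calculation above can be sketched the same way: since the win rate is linear in x, the zerg's best response to a known scouting frequency y is always one of the two pure strategies. `best_response` is a hypothetical helper name, and in practice y would have to be estimated from past games.

```python
def zerg_win_prob(x: float, y: float) -> float:
    # Simplified zerg win rate derived above.
    return -x * y + 0.6 * x + 0.2 * y + 0.4

def best_response(y: float) -> tuple[float, float]:
    """Zerg's pure best response (x, win rate) to a protoss who scouts a fraction y of games."""
    # The win rate is linear in x, so an endpoint x = 0 or x = 1 is always optimal.
    candidates = [(x, zerg_win_prob(x, y)) for x in (0.0, 1.0)]
    return max(candidates, key=lambda c: c[1])

x, win = best_response(1.0)  # protoss always scouts
print(x, round(win, 2))      # 0.0 0.6
x, win = best_response(0.0)  # protoss never scouts
print(x, round(win, 2))      # 1.0 1.0
```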

This is called an exploitative strategy because you are exploiting the fact that your opponent is not playing near equilibrium.

A similar concept occurs in high-level poker. There, instead of talking about Nash equilibrium strategies, players talk about "game theory optimal play", but it's the same thing. In heads-up (two-player) poker, following a game theory optimal strategy guarantees that no opponent can choose a strategy which beats you in the long run. However, if your opponents are not playing game theory optimal poker, it is not your best strategy, and you should instead play a different strategy to exploit their weaknesses.

Indifference Principle

You might have noticed something curious if you worked through the math in the previous sections. If you are the zerg player and playing at the Nash equilibrium, it doesn't matter what your opponent does: your win percentage will be fixed at 52%. In other words, if they scout 100% of the time you will win 52% of the time, and if they never scout you will still win 52% of the time. This is referred to as a weak Nash equilibrium (as opposed to a strict Nash equilibrium, where any player who deviates does strictly worse).
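A short numerical check of this claim, again assuming the simplified win rate from earlier: with x fixed at 0.2 the win rate doesn't depend on y, and with y fixed at 0.6 it doesn't depend on x. This is just the derivatives from before vanishing at the equilibrium. Every line of output should read 0.52.

```python
def zerg_win_prob(x: float, y: float) -> float:
    # Simplified zerg win rate derived above.
    return -x * y + 0.6 * x + 0.2 * y + 0.4

for y in (0.0, 0.3, 0.6, 1.0):
    # Zerg at equilibrium (x = 0.2): the protoss's scouting rate does not matter.
    print(f"y = {y:.1f}: zerg wins {zerg_win_prob(0.2, y):.2f}")

for x in (0.0, 0.5, 1.0):
    # Protoss at equilibrium (y = 0.6): the zerg's 12 pool rate does not matter either.
    print(f"x = {x:.1f}: zerg wins {zerg_win_prob(x, 0.6):.2f}")
```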

This seems odd given that poker programs playing close to the Nash equilibrium are able to beat pro poker players (see this news story). If a program is playing at the Nash equilibrium (and it is a weak equilibrium, which almost all poker strategies are), shouldn't opponents always "break even"? The answer is that the indifference only covers strategies that are played with non-zero probability at equilibrium: against an opponent playing at equilibrium, switching among those strategies doesn't change your win rate, but switching to a strategy that the equilibrium never plays can lower it. This is known as the indifference principle. For our simple example with the 12 pool and worker scout, every strategy had a non-zero probability at equilibrium, so if one player was playing at equilibrium, it indeed didn't matter what the opponent did. However, if there are more strategies and some of them should never be played at equilibrium, then playing those strategies will lower your win percentage against an opponent playing at equilibrium. Similarly, in poker there are often spots where the equilibrium frequency of betting, folding, calling, etc. is zero, and it is in these cases that the game theory optimal program or player will beat players not playing at equilibrium.
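To make the indifference principle concrete, the sketch below adds a third protoss option labelled `greedy`. This option and its win rates are entirely hypothetical, invented for illustration only. It does at least as badly for the protoss as not scouting against both zerg builds, so the equilibrium from before (x = 0.2, y = 0.6, greedy never played) still holds. Against a zerg playing at equilibrium, the two options used at equilibrium both give the zerg 52%, while the never-used option gives the zerg 60%.

```python
# Zerg win probability for each protoss option against each zerg build.
# "scout" and "no scout" use the numbers from the example above; the
# "greedy" row is HYPOTHETICAL and exists only for illustration.
ZERG_WIN = {
    "scout":    {"12 pool": 0.2, "macro": 0.6},
    "no scout": {"12 pool": 1.0, "macro": 0.4},
    "greedy":   {"12 pool": 1.0, "macro": 0.5},  # hypothetical numbers
}

def zerg_win_vs(option: str, x: float) -> float:
    """Zerg win rate when zerg 12 pools a fraction x of games and protoss always plays `option`."""
    row = ZERG_WIN[option]
    return x * row["12 pool"] + (1 - x) * row["macro"]

for option in ZERG_WIN:
    print(f"{option:>8}: zerg wins {zerg_win_vs(option, 0.2):.2f}")
# scout and no scout both give 0.52; the hypothetical greedy option gives 0.60.
```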

Real games

How should you actually use these Nash equilibrium percentages in a real-world setting? I think a good strategy would be something like this:

- If you know your opponent is playing far from their Nash equilibrium, then you should just exploit that. There is always a pure strategy among the best responses to their strategy (see this question on math.stackexchange.com). So, find the pure strategy which counters theirs and play it slightly more often than in equilibrium.

- If you are not sure of your opponent's strategy, you should just play at the Nash equilibrium, which guarantees that your opponent cannot gain an advantage through build orders.
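One way to turn the first rule of thumb into numbers is sketched below, under loud assumptions: the opponent's scouting rate is estimated from a count of recent games, the 0.6 threshold comes from the derivative d/dx = -y + 0.6 in the example, and `shift`, which stands in for "slightly more often", is a hypothetical tuning knob rather than anything derived in this post. With only a handful of games the estimate will be noisy.

```python
X_EQ = 0.2  # zerg equilibrium 12 pool rate from the example
Y_EQ = 0.6  # protoss equilibrium scouting rate from the example

def adjusted_pool_rate(scouts_seen: int, games: int, shift: float = 0.1) -> float:
    """Nudge the 12 pool rate toward the pure counter of an observed opponent.

    `shift` is a hypothetical tuning knob, not a value from this post.
    """
    if games == 0:
        return X_EQ  # no information yet: stay at equilibrium
    y_hat = scouts_seen / games  # estimated scouting frequency
    if y_hat > Y_EQ:
        # Scouting too often: macro (x = 0) is the pure counter, so 12 pool less.
        return max(0.0, X_EQ - shift)
    if y_hat < Y_EQ:
        # Scouting too rarely: 12 pool (x = 1) is the pure counter, so 12 pool more.
        return min(1.0, X_EQ + shift)
    return X_EQ  # opponent looks balanced: stay at equilibrium

print(adjusted_pool_rate(9, 10))  # about 0.1 (fewer 12 pools)
print(adjusted_pool_rate(2, 10))  # about 0.3 (more 12 pools)
```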

Randomly selecting strategies

It's fun to think about how you might randomly choose your strategy in-game to make sure you are playing at equilibrium. If you have access to a clock, the simplest method would be to look at the second hand at the start of the game. If your goal is to 12 pool 20% of the time, you can choose to 12 pool if the second hand reads less than 60*0.2 = 12 seconds and otherwise play a macro game. You might also be able to select a strategy using some in-game randomness: your starting location, the in-game tips before the game, which side of a building your SCV is on, etc.
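The clock rule is easy to write down. The sketch below, with hypothetical function names, maps the second hand (0 to 59) to a build and shows the same rule using Python's pseudo-random generator instead. Rounding 60 * pool_rate to a whole number of seconds keeps the threshold exact despite floating-point arithmetic.

```python
import random
from datetime import datetime

def build_from_second(second: int, pool_rate: float = 0.2) -> str:
    """Choose a build from a clock's second hand (0-59)."""
    threshold = round(60 * pool_rate)  # 12 seconds when pool_rate = 0.2
    return "12 pool" if second < threshold else "macro"

def build_from_rng(pool_rate: float = 0.2) -> str:
    """Same rule, using Python's pseudo-random generator instead of a clock."""
    return "12 pool" if random.random() < pool_rate else "macro"

print(build_from_second(datetime.now().second))
# Exactly 12 of the 60 possible seconds lead to a 12 pool, i.e. 20%.
print(sum(build_from_second(s) == "12 pool" for s in range(60)))  # 12
```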